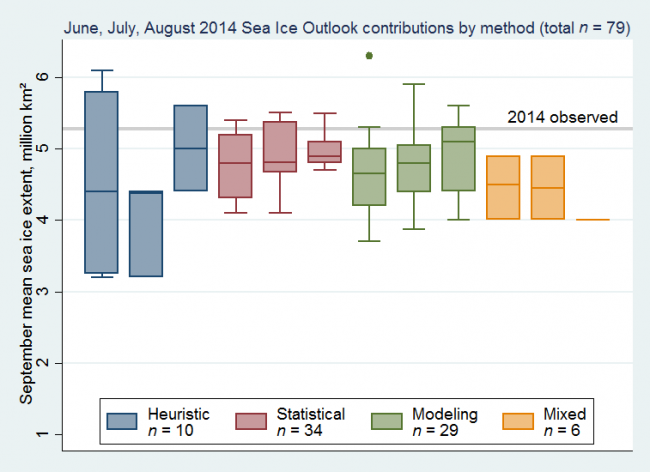

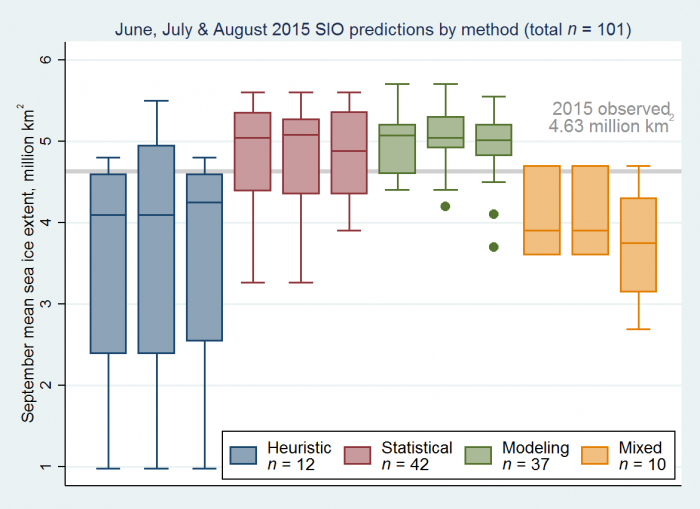

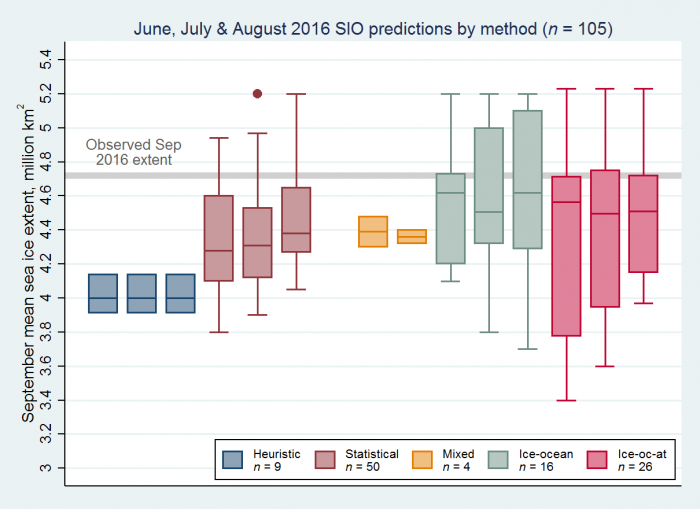

An excellent idea, you might well have thought. But that leads me to look at the annual Sea Ice Outlook (SIO) aka SIPN. Here are some pix:

2014:

2015:

2016:

[2013: everyone underestimated. 2012 was massively low, and everyone overestimated.]

If you’d just predicted by extrapolating the OLS line fit, then 2015 and 2016 would have been very close, and 2014 would have been within ~0.4. I think that OLS extrapolation should be considered the “null method” (comparable to weather forecasting, where the null method is “the same as yesterday”). Measured against that, all the techniques fail, at least when looked at on this aggregated scale. I’m not trying to suggest it is an easy problem, mind you. The lack of significant improvement in forecasting three, two or one months ahead is notable, too. Except maybe for the models, in 2016? But that could be chance (I mean, chance in the sense of having a situation amenable to being modelled). The SIO summary for 2016 does say “The SIO reports should start making some comments on forecast skill for those teams who have submitted forecasts for many years, or for those teams who submit or have published the skill of retrospective forecasts.” Which does sound like a good idea. Does anyone else make any attempt to assess these?
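For anyone who wants to try the “null method” comparison themselves, here is a minimal sketch in plain Python. It fits a straight line by ordinary least squares to past values, extrapolates one step ahead, and checks whether a given forecast lands closer to the observed value than that extrapolation does. The function names (`ols_fit`, `ols_extrapolate`, `beats_null`) are my own, and the numbers in the demo are a deliberately synthetic, exactly linear series, not real extent data; substitute whatever observational record and forecast values you prefer.

```python
def ols_fit(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return slope, intercept


def ols_extrapolate(xs, ys, x_new):
    """The 'null method': evaluate the fitted line at x_new."""
    slope, intercept = ols_fit(xs, ys)
    return intercept + slope * x_new


def beats_null(forecast, null_forecast, observed):
    """True if the forecast has a smaller absolute error than the null."""
    return abs(forecast - observed) < abs(null_forecast - observed)


# Synthetic illustration only: an exactly linear made-up series.
years = list(range(2000, 2010))
extents = [7.0 - 0.1 * (y - 2000) for y in years]

null_2010 = ols_extrapolate(years, extents, 2010)
print("null forecast for 2010:", round(null_2010, 3))
```

A forecast only counts as skilful in this sense if `beats_null` comes out true reasonably often over many years; one good year on its own could be chance.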

This year’s extent is shaping up to be dull.

Refs

* JA reviews “Practice and philosophy of climate model tuning across six US modeling centers”.

* Delingpole freezes over.

* Reality Only SEEMS to be Easier to Understand If It’s Interpreted as a Battle Between the Forces of Good and the Forces of Evil.